Plugin Settings

Model Context Size

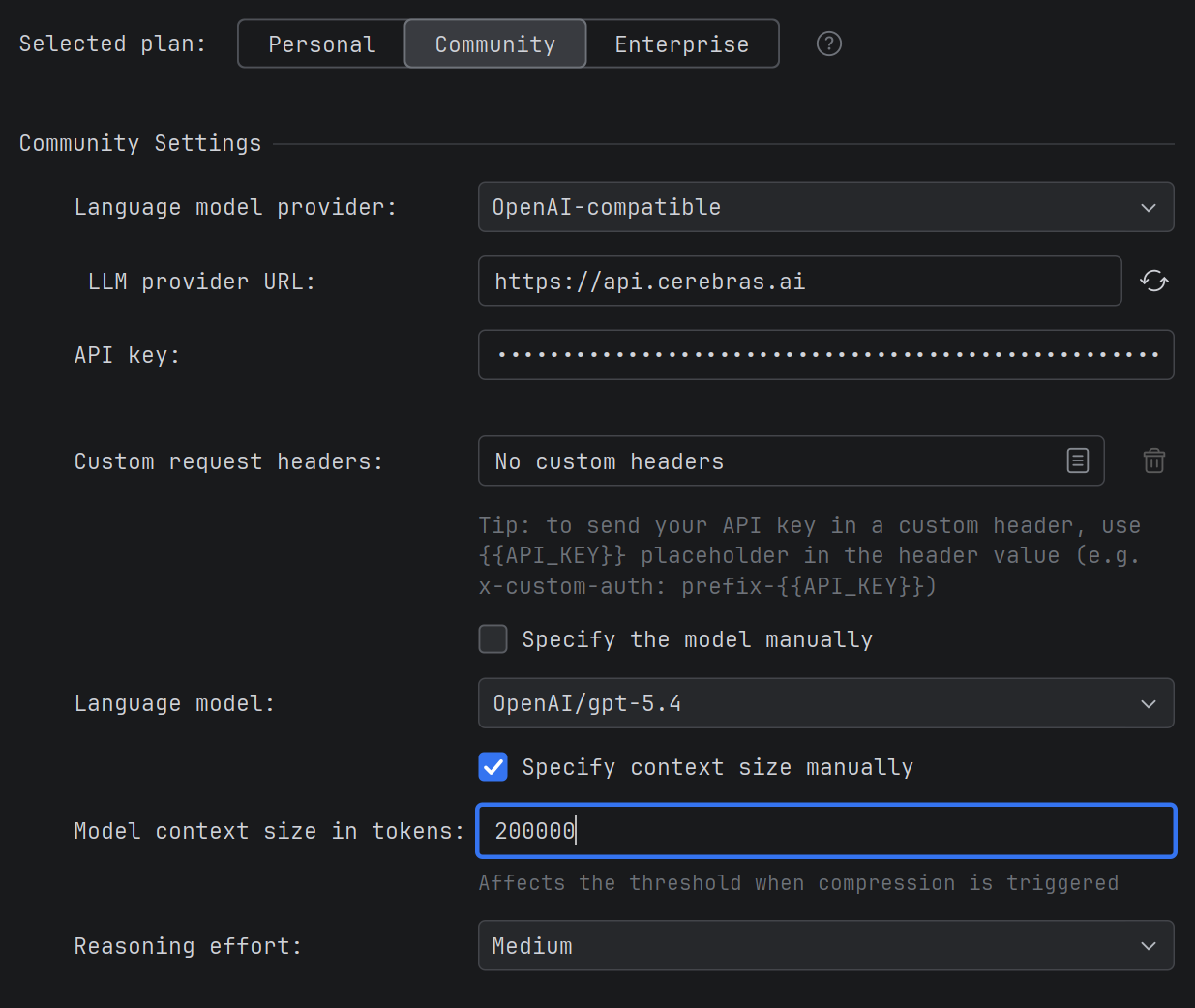

For models connected via OpenAI-compatible mode, a field is available for manually specifying the context size.

Previously, the plugin could not always determine the context limit for such models, so the interface sometimes displayed incorrect values. You can now set the exact context size manually, and the plugin uses that value to manage the size of the requests it sends.

When It's Useful

- You're using OpenAI-compatible models (e.g., Qwen, DeepSeek) with a non-standard context size.

- You need the plugin to correctly calculate limits when sending requests and compressing the chat.
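
To illustrate why an accurate context size matters for request sizing and chat compression, here is a minimal sketch of the kind of check a client could run before sending a request. It is not the plugin's actual implementation: the names `Message`, `estimate_tokens`, `needs_compression`, and `reply_reserve`, as well as the 4-characters-per-token heuristic, are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumption: roughly 4 characters per token. Real tokenizers differ per model.
CHARS_PER_TOKEN = 4


@dataclass
class Message:
    role: str
    content: str


def estimate_tokens(text: str) -> int:
    """Rough token estimate (hypothetical heuristic, not a real tokenizer)."""
    # Ceiling division, with a floor of one token per message.
    return max(1, (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN)


def needs_compression(
    messages: list[Message],
    context_size: int,
    reply_reserve: int = 1024,
) -> bool:
    """Return True when the chat history plus a reserve for the model's
    reply would exceed the manually configured context size."""
    used = sum(estimate_tokens(m.content) for m in messages)
    return used + reply_reserve > context_size


history = [Message("user", "a" * 4000)]  # about 1000 estimated tokens
print(needs_compression(history, context_size=32768))
print(needs_compression(history, context_size=2000))
```

With the same chat history, the larger context size leaves room for the request, while the smaller one signals that the chat should be compressed first. If the configured value is higher than the model's real limit, a check like this would pass requests the server then rejects, which is why the manual value should match the model's actual context size.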