Explyt 5.3: New Agent Modes and LLM Choice for Personal Use

EXPLYT TEAM

28.12.2025

4 MINUTES

Explyt 5.3 brings updates for individual developers: LLM selection in Settings for Personal plan users, new Agent modes, a more reliable file editing tool, and UI improvements.

Model Selection in Settings for Individual Users

Individual developers can now choose the LLM they want to use with the agent.

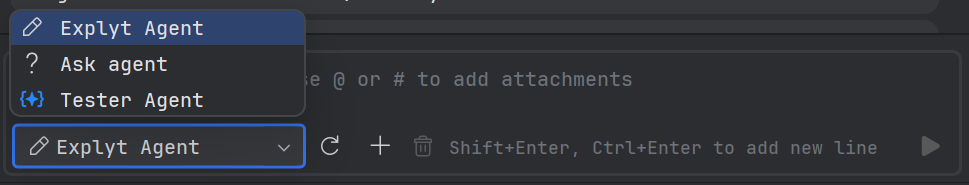

New Agent Modes

We have added three modes and plan to implement more in the coming months.

Agent — a full-featured agent that can read and edit files, identical to the previous default chat mode. It is best suited for day-to-day work unrelated to project exploration or testing.

Ask — a read-only agent mode. It can read project files and browse web pages, making it well-suited for project exploration (for example, onboarding to a new codebase), brainstorming implementation ideas, and analyzing the causes of bugs.

Tester — a mode focused on test generation. While tests can also be generated in Agent mode, Tester is designed to deliver more accurate and consistent results.

For example, in our internal benchmark of 33 real projects (endpoints of ~2,000 lines, ≥150 KLOC, using Spring, TestContainers, Java, and Kotlin), with all other factors equal (the same model and the same input prompt), the Tester agent outperformed the standard Agent mode on all three metrics we measured:

| Test generation task from source code | Agent | Tester |
|---|---|---|
| Percentage of compiled test classes | 76% | 94% |
| Percentage of passing tests | 67% | 90% |
| Test coverage | 64% | 80% |

The Tester mode is currently only available in IntelliJ IDEA (and its forks) for Java and Kotlin.

Smooth AI Chat Display

We improved the UI by making the display of AI-generated text smoother, reducing eye strain.

Agent Edit Tool: Reliability Boost

As part of our ongoing work on the AI agent, we have reworked the file editing tool (edit_tool) to increase its reliability and success rate. The benchmark results below show a noticeable improvement in agent effectiveness.

Technical benchmark results show a statistically significant improvement in key metrics. The file editing success rate rose from 82.47% to 89.47% on small tasks (a relative gain of 8.4%), from 92.54% to 97.06% on complex tasks (4.86%), and from 90.55% to 96.36% on test generation (6.42%). The agent also follows requirements more reliably and violates explicit user instructions less often.
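The before/after success rates quoted above can be read two ways: as a gain in percentage points or as a relative gain. The short sketch below recomputes both from the published rates. The `gains` helper and the `rates` dictionary are illustrative names of our own, not part of Explyt, and the rounding is an assumption about how the figures were reported.

```python
def gains(before: float, after: float) -> tuple[float, float]:
    """Return (percentage-point gain, relative gain in percent)."""
    absolute = after - before              # difference in percentage points
    relative = absolute / before * 100     # gain relative to the old rate
    return absolute, relative


# Success rates in percent (before, after), copied from the text above.
rates = {
    "small tasks": (82.47, 89.47),
    "complex tasks": (92.54, 97.06),
    "test generation": (90.55, 96.36),
}

for task, (before, after) in rates.items():
    absolute, relative = gains(before, after)
    print(f"{task}: +{absolute:.2f} pp, +{relative:.2f}% relative")
```

The relative values computed this way land close to the quoted percentages, while the percentage-point gains are smaller. This suggests the quoted improvements are relative to the previous success rate, with small differences probably due to rounding of the published rates.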

For developers and testers, this means more predictable and safe interaction with the agent. The agent now makes fewer unintended changes, causes less code damage, and more frequently completes tasks successfully. The improved reliability of the editing tool reduces time spent debugging and fixing errors introduced by the agent.

Install the update now and see how Explyt transforms the way you build, test, and fix code.
